
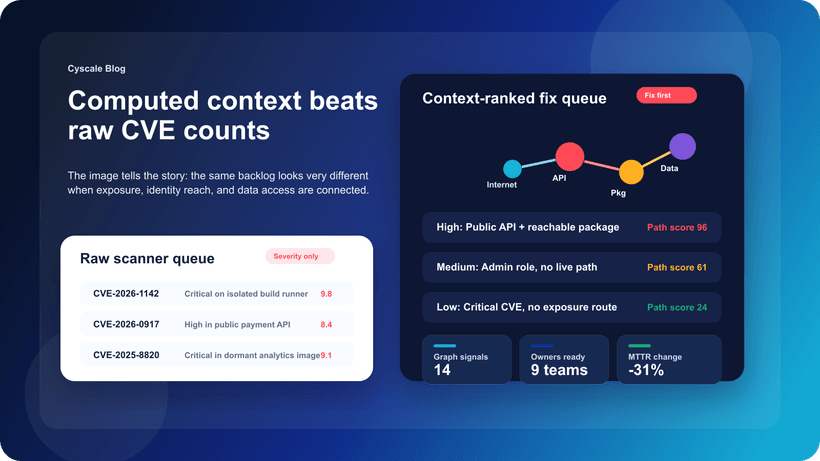

Why Computed Context Beats Raw CVE Counts in Cloud Vulnerability Prioritization

CEO & Founder at Cyscale

Friday, March 6, 2026

Updated: March 21, 2026


Security teams are not short on findings. They are short on confidence.

That is the real problem behind most vulnerability backlogs. A team can have ten thousand open findings and still not know which ten deserve attention this week. This is why cloud vulnerability management keeps stalling even in organizations that have already bought the right scanners.

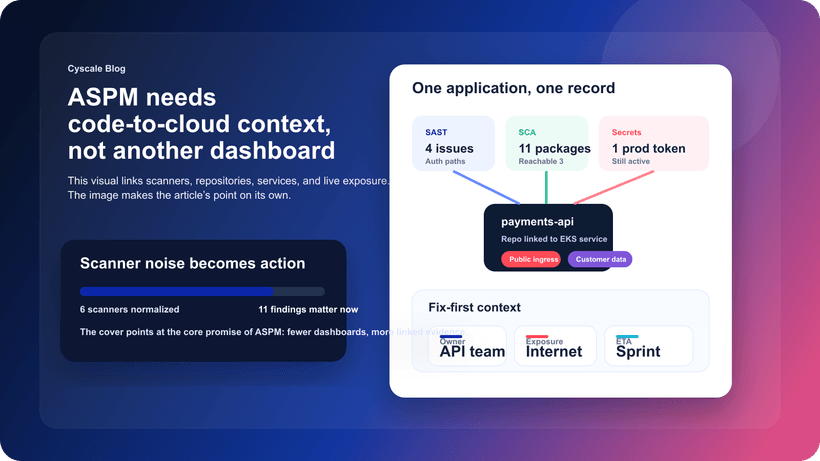

The scanners are usually not the bottleneck. The missing piece is context.

Recent work in the Cyscale platform around graph-derived computed properties matters for exactly this reason. Instead of treating each vulnerability as a flat record, teams can evaluate it in relation to where it runs, what can reach it, what identities are attached to it, and what business systems sit behind it. That is a much better basis for prioritization.

Source inspiration: Leveraging The Knowledge Graph

Why raw CVE counts create bad decisions

A raw CVE list answers one narrow question: what vulnerable components exist?

That matters, but it does not answer the question security leaders and engineering managers actually care about: what is most likely to turn into an incident?

The problem with raw counts is that they flatten everything:

- A critical package in an isolated internal batch workload looks more urgent than a moderate-severity issue on a public customer-facing service, even though the public service is the one an attacker can actually reach.

- A package with a frightening score but no realistic access path can crowd out a vulnerability that sits behind an exposed API and a permissive identity.

- Large teams start measuring success by ticket closure volume because the queue no longer reflects business reality.

Once that happens, remediation becomes a reporting exercise instead of a risk-reduction exercise.

What computed context adds

Computed context is simply derived information that changes the meaning of a finding.

In cloud environments, that usually means bringing together several signals:

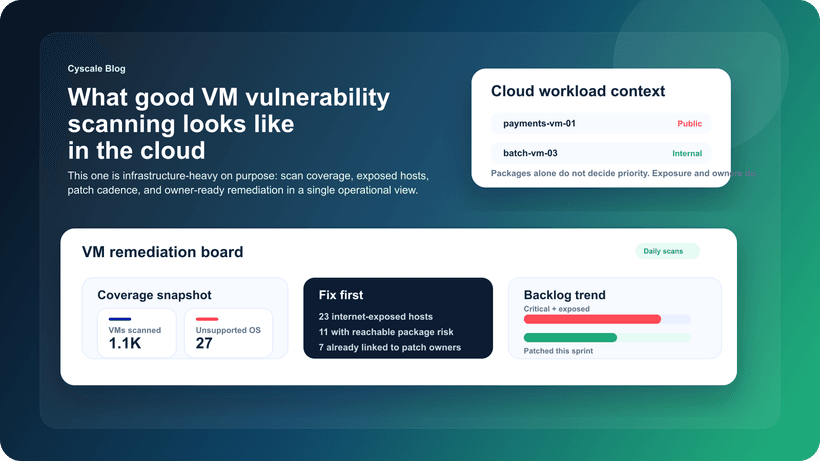

- Reachability: can the workload, container, VM, or service be reached from the internet or from another risky segment?

- Identity breadth: do attached roles or service accounts expand blast radius if the asset is compromised?

- Data proximity: does the path end near regulated, customer, or otherwise sensitive data?

- Business criticality: is this tied to a production service, a revenue path, or a crown-jewel system?

- Runtime relevance: is the vulnerable component actually deployed and active in an environment that matters?

The important thing is not to create yet another score. The important thing is to make the prioritization explainable.

An engineering team should be able to read a finding and understand, in plain operational language, why it was promoted:

- The workload is internet-facing.

- The identity attached to it has broad access.

- The service sits on the same path as sensitive data.

- Breaking this path removes a real route to impact.

That is what good context does. It turns prioritization into something people can defend.
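As a minimal sketch of what an explainable promotion could look like in code, the example below attaches a plain-language reason to each context signal. The field names, the `FindingContext` class, and the placeholder CVE identifier are assumptions made for illustration; they are not Cyscale's actual schema or API.

```python
from dataclasses import dataclass


# Hypothetical signal names chosen for illustration only.
@dataclass
class FindingContext:
    cve_id: str
    internet_facing: bool = False
    broad_identity: bool = False
    near_sensitive_data: bool = False
    production: bool = False


# One plain-language explanation per signal.
REASONS = {
    "internet_facing": "The workload is internet-facing.",
    "broad_identity": "The identity attached to it has broad access.",
    "near_sensitive_data": "The service sits on the same path as sensitive data.",
    "production": "The workload runs in production.",
}


def promotion_reasons(ctx: FindingContext) -> list[str]:
    """Return the human-readable reasons for every signal that is set."""
    return [text for name, text in REASONS.items() if getattr(ctx, name)]


# "CVE-0000-0000" is a placeholder, not a real identifier.
finding = FindingContext("CVE-0000-0000", internet_facing=True, broad_identity=True)
for reason in promotion_reasons(finding):
    print(reason)
```

A finding with no signals set returns an empty list, which is a reasonable cue that it does not belong in the promoted queue.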

Why graph context matters more than isolated enrichment

You can enrich findings in many ways, but graph context is especially useful because cloud environments are relationship-heavy systems.

Assets do not exist alone. They sit inside VPCs, clusters, accounts, subscriptions, resource groups, projects, trust relationships, and application dependencies. The question is rarely whether one thing is vulnerable. The question is how that thing connects to everything around it.

This is why graph-derived context changes the quality of the answer:

- It shows whether exposure is direct or transitive.

- It shows whether identity relationships increase risk.

- It helps explain why one fix may break multiple risky paths at once.

- It makes cross-cloud and cross-account reasoning easier than reading disconnected tables.

In practice, that means a finding can move from “high severity” to “fix this today” for reasons that are immediately visible.
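To make the direct-versus-transitive distinction concrete, here is a small sketch that runs a breadth-first search over a toy adjacency map. The asset names, the `internet` source node, and the function names are hypothetical, and a real cloud graph would carry far more edge types than this one.

```python
from collections import deque


def exposure_path(graph, source, target):
    """Breadth-first search: shortest path from source to target, or None."""
    parents = {source: None}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        if node == target:
            path = []
            while node is not None:
                path.append(node)
                node = parents[node]
            return path[::-1]
        for neighbor in graph.get(node, []):
            if neighbor not in parents:
                parents[neighbor] = node
                queue.append(neighbor)
    return None


def classify_exposure(graph, asset, source="internet"):
    """Label an asset as direct, transitive, or unreachable from the source."""
    path = exposure_path(graph, source, asset)
    if path is None:
        return "unreachable", None
    kind = "direct" if len(path) == 2 else "transitive"
    return kind, path


# Toy graph: an edge means "can reach". All names are illustrative.
graph = {
    "internet": ["load-balancer"],
    "load-balancer": ["api-service"],
    "api-service": ["orders-db"],
    "batch-worker": ["orders-db"],
}

for asset in ("load-balancer", "orders-db", "batch-worker"):
    print(asset, classify_exposure(graph, asset))
```

Returning the path alongside the label keeps the explanation visible: the reader sees not only that `orders-db` is exposed, but through which hops.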

What good prioritization looks like in practice

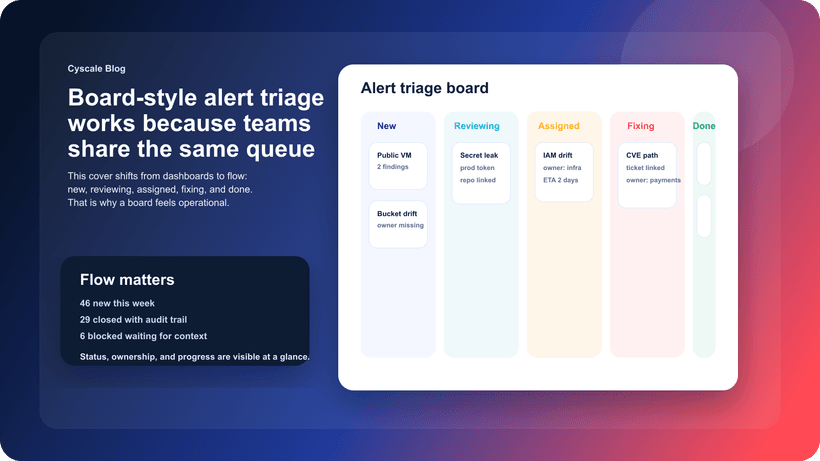

A good prioritization workflow is not complicated. It is disciplined.

Start with the smallest high-impact queue

Most teams improve faster when they create a fix-first queue instead of arguing over the full backlog. That queue should include:

- Internet-facing assets with confirmed vulnerable packages

- Workloads attached to broad or excessive permissions

- Assets near sensitive data stores

- Repeated issues that represent systemic engineering drift
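The four criteria above can be sketched as a simple filter. This is an illustration under stated assumptions: the dictionary keys, the finding identifiers, and the `repeat_threshold` parameter are all hypothetical, and "repeated issue" is approximated here as a root cause that shows up at least `repeat_threshold` times.

```python
from collections import Counter


def fix_first_queue(findings, repeat_threshold=2):
    """Keep findings that match at least one fix-first criterion, with reasons."""
    cause_counts = Counter(f["root_cause"] for f in findings)
    queue = []
    for f in findings:
        reasons = []
        if f["internet_facing"] and f["package_confirmed"]:
            reasons.append("internet-facing with a confirmed vulnerable package")
        if f["broad_permissions"]:
            reasons.append("attached to broad or excessive permissions")
        if f["near_sensitive_data"]:
            reasons.append("near a sensitive data store")
        if cause_counts[f["root_cause"]] >= repeat_threshold:
            reasons.append("repeated root cause: " + f["root_cause"])
        if reasons:
            queue.append((f["id"], reasons))
    return queue


# Illustrative records; every value here is made up.
findings = [
    {"id": "F-1", "internet_facing": True, "package_confirmed": True,
     "broad_permissions": False, "near_sensitive_data": False,
     "root_cause": "outdated-base-image"},
    {"id": "F-2", "internet_facing": False, "package_confirmed": True,
     "broad_permissions": False, "near_sensitive_data": False,
     "root_cause": "one-off"},
    {"id": "F-3", "internet_facing": False, "package_confirmed": True,
     "broad_permissions": True, "near_sensitive_data": True,
     "root_cause": "outdated-base-image"},
]

queue = fix_first_queue(findings)
for finding_id, reasons in queue:
    print(finding_id, reasons)
```

Each queued item carries its reasons with it, so the queue stays explainable without a separate lookup.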

Rank by path-breaking value

If fixing one workload, role, or policy breaks several risky paths, it should often outrank a finding that only improves hygiene in isolation.

That is one of the biggest changes teams notice when they move from CVE counting to contextual prioritization. They stop thinking in terms of “which package is scariest?” and start thinking in terms of “which fix reduces the most real risk?”
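One rough way to approximate path-breaking value is to count how many known risky paths pass through each fixable node. The sketch below assumes the paths have already been computed elsewhere; the node names and the `rank_fixes` helper are hypothetical, and a production model would likely weight paths by impact rather than counting them equally.

```python
from collections import Counter


def rank_fixes(paths, fixable):
    """Rank fixable nodes by the number of risky paths each one sits on."""
    counts = Counter()
    for path in paths:
        # set() avoids counting a node twice if it repeats within one path.
        for node in set(path) & fixable:
            counts[node] += 1
    # Most paths broken first; ties are ordered by name for stable output.
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


# Illustrative risky paths from an entry point to an impact point.
paths = [
    ["internet", "api-service", "role-admin", "orders-db"],
    ["internet", "web-frontend", "role-admin", "billing-bucket"],
    ["internet", "api-service", "cache"],
]
fixable = {"api-service", "role-admin", "web-frontend"}

for node, broken in rank_fixes(paths, fixable):
    print(node, broken)
```

In this toy data, tightening `role-admin` or patching `api-service` each breaks two paths, while `web-frontend` breaks one, which is the kind of comparison a flat CVE list cannot express.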

Keep the explanation visible

If a team cannot explain why a finding is in the top queue, the prioritization model is too opaque. Every promoted issue should show the context behind the decision.

Common mistakes teams still make

Even after adopting better context, there are a few traps that show up again and again:

Mistake 1: keeping scanner silos intact

If vulnerabilities, posture findings, identities, and data exposure still live in separate operational views, teams are forced back into manual correlation. The priority model becomes theory instead of workflow.

Mistake 2: optimizing for the wrong KPI

Closing the largest number of tickets is not the same as reducing the most risk. Teams should track metrics such as:

- Mean time to triage high-context findings

- Mean time to remediate reachable high-risk issues

- Percentage of high-risk paths broken within SLA

- Repeat rate for the same root cause
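As a hedged illustration, three of these metrics can be computed from a list of finding records. The record fields, the seven-day SLA, and the definition of repeat rate used here (findings beyond the first for each root cause, divided by all findings) are assumptions; teams will likely define them differently.

```python
from datetime import datetime, timedelta
from statistics import mean


def remediation_kpis(records, sla):
    """Compute remediation metrics for a non-empty list of finding records."""
    if not records:
        raise ValueError("records must not be empty")
    fixed = [r for r in records if r["fixed_at"] is not None]
    durations = [r["fixed_at"] - r["opened_at"] for r in fixed]
    days_to_fix = [d.total_seconds() / 86400 for d in durations]
    within_sla = sum(1 for d in durations if d <= sla)
    distinct_causes = len({r["root_cause"] for r in records})
    return {
        "mean_days_to_remediate": mean(days_to_fix) if days_to_fix else None,
        # Open findings count against the SLA percentage.
        "pct_fixed_within_sla": 100 * within_sla / len(records),
        "repeat_rate": (len(records) - distinct_causes) / len(records),
    }


# Illustrative records; dates and root causes are made up.
records = [
    {"opened_at": datetime(2026, 1, 1), "fixed_at": datetime(2026, 1, 5),
     "root_cause": "base-image"},
    {"opened_at": datetime(2026, 1, 1), "fixed_at": datetime(2026, 1, 11),
     "root_cause": "base-image"},
    {"opened_at": datetime(2026, 1, 3), "fixed_at": None,
     "root_cause": "iam-wildcard"},
    {"opened_at": datetime(2026, 1, 4), "fixed_at": datetime(2026, 1, 6),
     "root_cause": "open-security-group"},
]

kpis = remediation_kpis(records, sla=timedelta(days=7))
print(kpis)
```

Feeding only reachable, high-context findings into `records` keeps these numbers tied to risk rather than to ticket volume.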

Mistake 3: assuming “critical” always means “first”

Critical CVEs deserve attention, but not every critical issue belongs at the top of the queue. Context still matters.

How this connects to Cyscale

This is where Cyscale’s model is useful. We have spent years building around the idea that cloud risk is relational, not flat.

The recent release-note work on computed properties makes that more visible in day-to-day operations. It helps teams understand not just that a finding exists, but why it should be promoted or deprioritized.

That matters across several workflows.

It also matters for smaller teams. In lean environments, security success often depends less on how many findings you can detect and more on how clearly you can narrow the queue to work that makes a measurable difference.

A practical rollout plan

If you want to make this operational, keep it simple:

- Define the signals that should automatically elevate a finding: exposure, identity breadth, data access, production placement.

- Build a weekly fix-first queue from those signals.

- Review whether engineering teams can explain why every item is there.

- Track whether the queue is shrinking because risky paths are being removed, not because tickets are being closed mechanically.

- Feed repeat patterns back into stronger controls, better defaults, and clearer ownership.

Final thought

The future of vulnerability management is not “more scanning.”

It is better context, better explanations, and better decisions.

That is what helps security teams move from backlog management to real risk reduction.



Ovidiu brings his cybersecurity experience to the table, innovating with AI-powered solutions that address the real-world challenges of cloud security. His approach is focused on providing SaaS companies with the tools they need to navigate the complexities of compliance and grow securely within their regulated environments.
